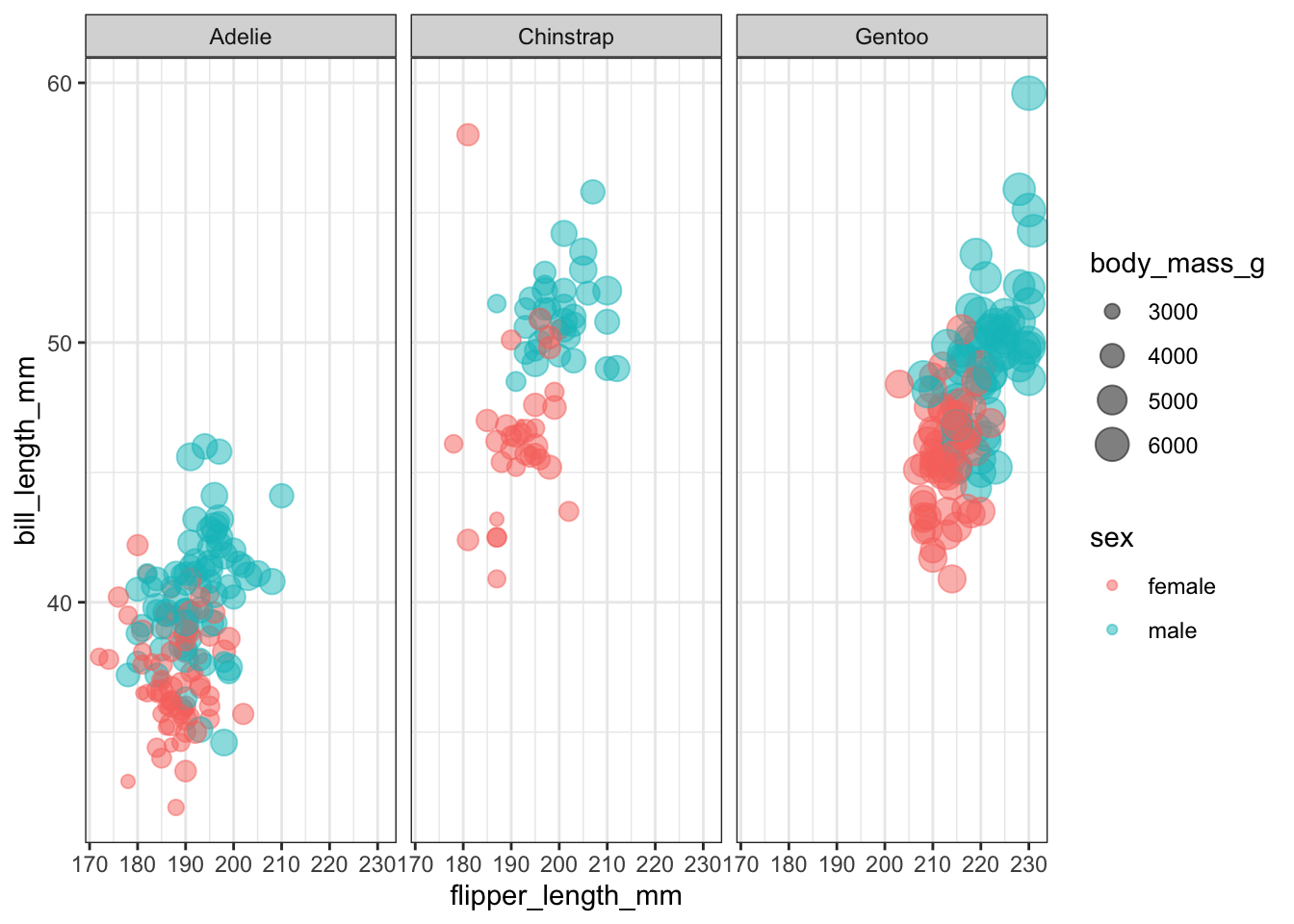

Rows: 10

Columns: 7

$ species <fct> Adelie, Adelie, Adelie, Adelie, Adelie, Adelie, Adel…

$ bill_length_mm <dbl> 39.1, 39.5, 40.3, 36.7, 39.3, 38.9, 39.2, 41.1, 38.6…

$ bill_depth_mm <dbl> 18.7, 17.4, 18.0, 19.3, 20.6, 17.8, 19.6, 17.6, 21.2…

$ flipper_length_mm <int> 181, 186, 195, 193, 190, 181, 195, 182, 191, 198

$ body_mass_g <int> 3750, 3800, 3250, 3450, 3650, 3625, 4675, 3200, 3800…

$ sex <fct> male, female, female, female, male, female, male, fe…

$ .pred_class <fct> male, female, female, female, male, female, male, fe…